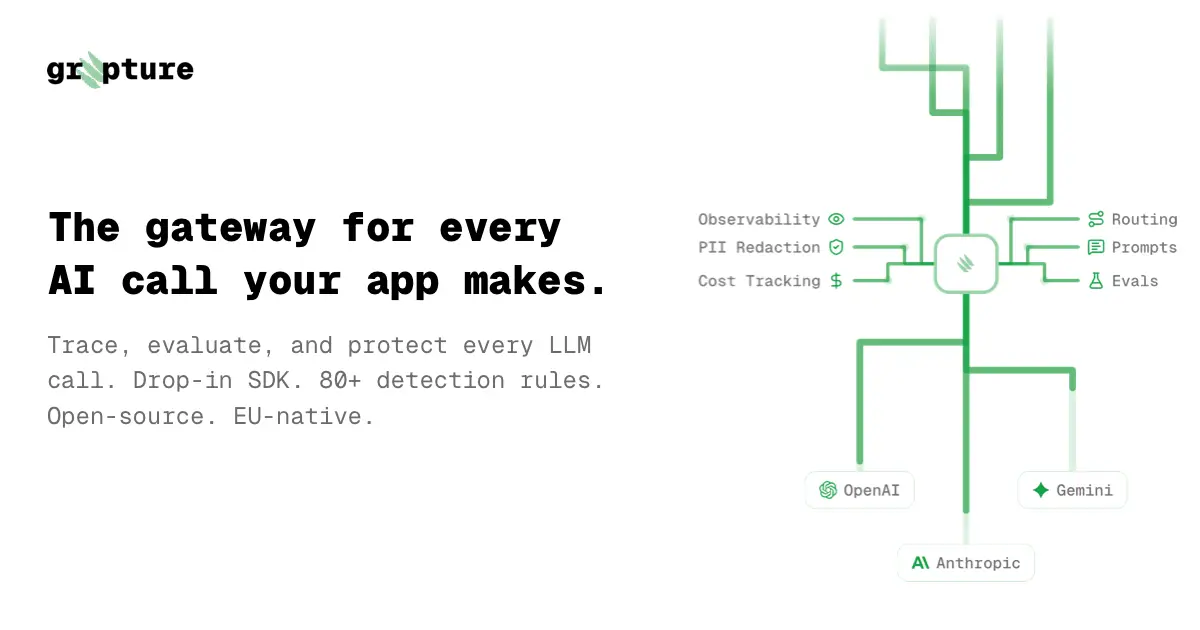

Grepture is an AI gateway that sits between your app and any LLM provider. You decide how it runs: add a proxy hop and modify requests and responses in the hot path, or just collect observability data asynchronously in trace mode.

Some of the highlights:

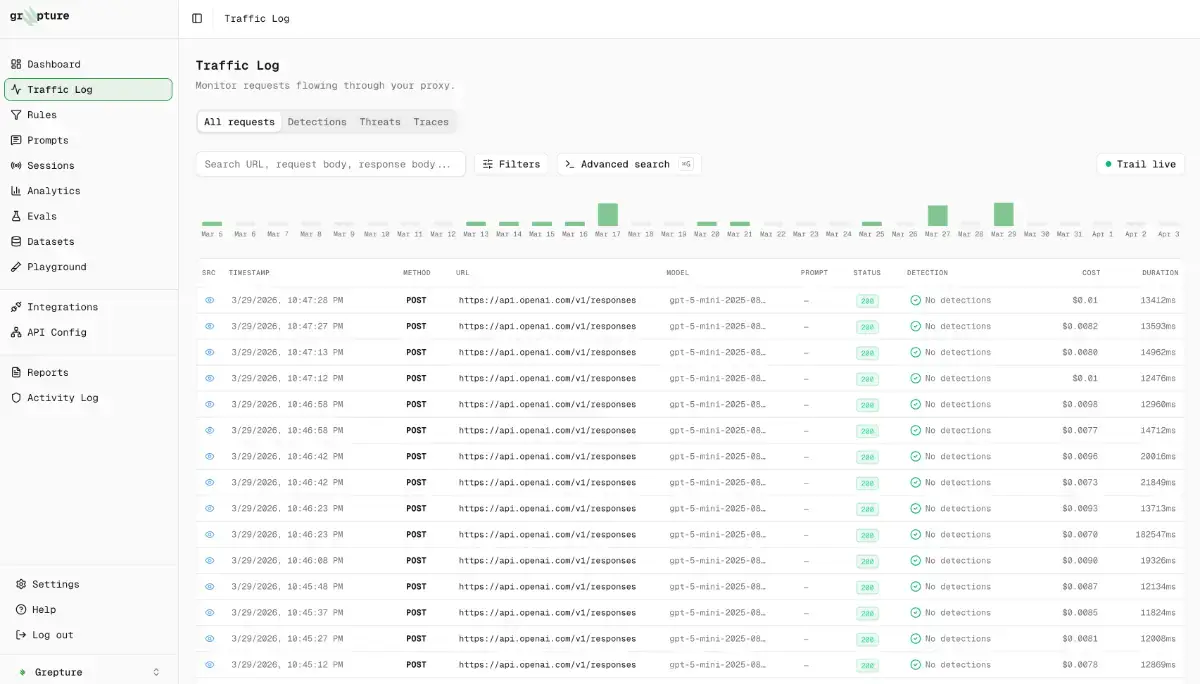

• Full traces of every request, including multi-step conversations

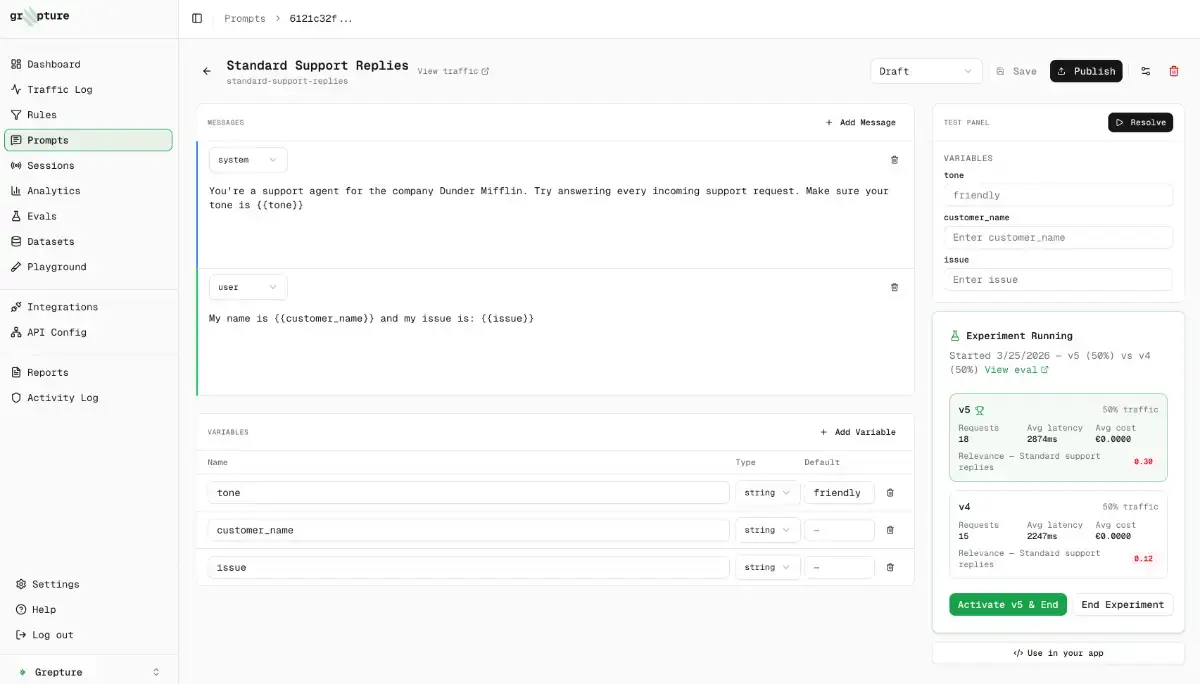

• LLM-as-a-judge evals running on live traffic and automatic dataset creation

• Automatic PII detection + redaction and rehydration before data hits the LLM

• Per-request cost and token analytics

• A zero-retention mode where prompt bodies never hit our DB (we're EU-hosted)

• CLI to pipe full dev sessions through the proxy
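To make the redaction and rehydration idea concrete, here is a minimal sketch of the general technique. It is not Grepture's implementation or API: the function names `redact` and `rehydrate`, the `<EMAIL_n>` placeholder format, and the email-only regex are all assumptions chosen for illustration. It also assumes rehydration is applied to the model's response after the call.

```python
import re

# Hypothetical, simplified detector: only matches email addresses.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact(text):
    """Replace each email with a placeholder; return the redacted text and the token map."""
    token_for_value = {}   # original value -> placeholder
    mapping = {}           # placeholder -> original value

    def _substitute(match):
        value = match.group(0)
        if value not in token_for_value:
            token = f"<EMAIL_{len(token_for_value) + 1}>"
            token_for_value[value] = token
            mapping[token] = value
        return token_for_value[value]

    return EMAIL_RE.sub(_substitute, text), mapping


def rehydrate(text, mapping):
    """Put the original values back wherever a placeholder appears."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text


prompt = "Contact alice@example.com or bob@example.org, then alice@example.com again."
redacted, mapping = redact(prompt)
# `redacted` is what would be sent to the LLM; `mapping` stays on the gateway side.
restored = rehydrate("Reply to <EMAIL_2> first.", mapping)
```

The same value always maps to the same placeholder, so the model can still reason about "the first address" versus "the second" without ever seeing either one.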

The proxy, CLI and SDK are already open source, and the plan is to eventually make the app available to self-host as well. Right now we're focused on making everything more useful for AI-heavy products and on optimizing for developer experience.


Publisher: benm

Launch Date: 2026-05-08

Category: Development

Pricing: Freemium

For Sale: No
